moabb.datasets.Kalunga2016

- class moabb.datasets.Kalunga2016(subjects=None, sessions=None)

Bases: BaseDataset

Dataset Snapshot

Kalunga2016

Online SSVEP-based BCI using Riemannian geometry for assistive robotics with shared control scheme

SSVEP, 4 classes (13 vs 17 vs 21 vs rest)

Class Labels: 13, 17, 21, rest

Benchmark Context

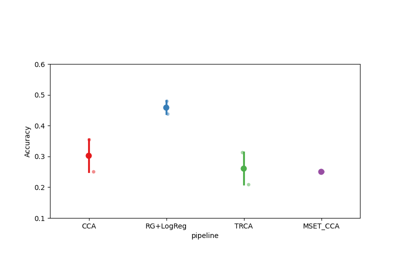

WithinSession: included in 1 MOABB benchmark table. Scores are aggregated across the available pipelines (WithinSession accuracy).

- SSVEP, all classes (6 pipelines): Max 70.89 · Median 47.17 · Mean 47.27 · Std 25.18

Citation & Impact

- Paper DOI: 10.1016/j.neucom.2016.01.007


- MOABB tables: 1 (WithinSession)


HED Event Tags

Source: MOABB BIDS HED annotation mapping.

- 13: Sensory-event, Experimental-stimulus, Visual-presentation, Label
- 17: Sensory-event, Experimental-stimulus, Visual-presentation, Label
- 21: Sensory-event, Experimental-stimulus, Visual-presentation, Label
- rest: Experiment-structure, Rest


Channel Summary

- Total channels: 8
- Channel types: 8 EEG
- Montage: 10-05
- Sampling rate: 256 Hz
- Reference: right mastoid
- Notch / line: 50 Hz

This summary is generated automatically from MOABB metadata. Please consult the original publication to confirm the experimental protocol details.

SSVEP Exo dataset.

SSVEP dataset from E. Kalunga's PhD work at the University of Versailles [1].

The dataset contains recordings from 12 male and female subjects aged between 20 and 28 years. Informed consent was obtained from all subjects; each one signed a form attesting to their consent. Each subject sits in an electric wheelchair with the right upper limb resting on the exoskeleton. The exoskeleton is functional but is not used during the recording of this experiment.

A 20 × 30 cm panel is attached to the left side of the chair, with 3 groups of 4 LEDs blinking at different frequencies. Although the panel is on the left side, users can see it without moving their head. The subjects were asked to sit comfortably in the wheelchair and to follow the auditory instructions; they could move and blink freely.

A sequence of trials is presented to the user. Each trial begins with an audio cue indicating which LED to focus on, or, for the reject class, to focus on a fixation point placed at an equal distance from all LEDs. A trial lasts 5 seconds, and there is a 3-second pause between trials. The evaluation is conducted during a session consisting of 32 trials: 8 trials for each frequency (13 Hz, 17 Hz and 21 Hz) and 8 trials for the reject class, i.e. when the subject is not focusing on any specific blinking LED.
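Using the protocol figures above (32 trials per session, 8 per class, 5 s trials separated by 3 s pauses), the timeline of one session can be sketched as follows. This is a minimal illustration only: the helper name `make_session_schedule`, the uniformly random trial order and the assumption that the first trial starts at t = 0 are hypothetical and are not read from the recordings.

```python
import random

# Protocol values stated in the dataset description.
CLASSES = ["13", "17", "21", "rest"]
TRIALS_PER_CLASS = 8
TRIAL_DURATION_S = 5.0
PAUSE_S = 3.0


def make_session_schedule(seed=None):
    """Return a hypothetical list of (onset_s, label) tuples for one session.

    Assumptions (not from the dataset): trial order is shuffled uniformly
    at random and the first trial starts at t = 0.
    """
    labels = [c for c in CLASSES for _ in range(TRIALS_PER_CLASS)]
    random.Random(seed).shuffle(labels)
    step = TRIAL_DURATION_S + PAUSE_S  # each trial is followed by a pause
    return [(i * step, label) for i, label in enumerate(labels)]


schedule = make_session_schedule(seed=0)
n_trials = len(schedule)
# The last trial is not followed by a pause, so only n_trials - 1 pauses count.
session_length_s = n_trials * TRIAL_DURATION_S + (n_trials - 1) * PAUSE_S
print(n_trials, session_length_s)  # 32 253.0
```

Under these assumptions a session lasts a little over 4 minutes of stimulation time.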

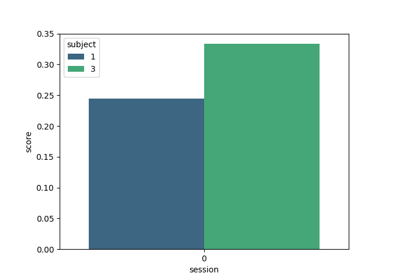

There are between 2 and 5 sessions per user, recorded on different days by the same operators, with the same hardware and under the same conditions.

References

[1] Emmanuel K. Kalunga, Sylvain Chevallier, Quentin Barthelemy. “Online SSVEP-based BCI using Riemannian Geometry”. Neurocomputing, 2016. arXiv report: https://arxiv.org/abs/1501.03227

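```python
from moabb.datasets import Kalunga2016

dataset = Kalunga2016()
# Load subject 1 (downloaded automatically if no local copy is found).
data = dataset.get_data(subjects=[1])
# Nested dict for subject 1: session -> run -> raw recording.
print(data[1])
```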

Dataset summary

| #Subj | #Chan | #Classes | #Trials / class | Trials length | Freq | #Sessions |
|---|---|---|---|---|---|---|
| 12 | 8 | 4 | 16 | 2 s | 256 Hz | 1 |

Participants

Population: healthy

Equipment

Amplifier: g.tec MobiLab

Electrodes: EEG

Montage: standard_1005

Reference: right mastoid

Data Access

DOI: 10.1016/j.neucom.2016.01.007

Data URL: https://zenodo.org/record/2392979

Repository: Zenodo

Experimental Protocol

Paradigm: ssvep

Feedback: none

Stimulus: flickering

Notes

Note

Kalunga2016 was previously named SSVEPExo. SSVEPExo will be removed in version 1.1. The event labels for 17 Hz and 21 Hz were swapped after an investigation conducted by ponpopon on GitHub.

The dataset includes recordings from 12 healthy subjects.

Added in version 1.2.0.

- property all_subjects

Full list of subjects available in this dataset (unfiltered).
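The difference between the unfiltered list and the subjects requested through the constructor can be illustrated with a small pure-Python sketch. The helper `select_subjects` is hypothetical and is not MOABB's implementation; it assumes that subjects=None keeps every subject, that unknown subject numbers are rejected, and that the 12 subjects are numbered 1 to 12.

```python
def select_subjects(all_subjects, subjects=None):
    """Hypothetical filter: keep the requested subjects, in dataset order."""
    if subjects is None:
        return list(all_subjects)
    unknown = set(subjects) - set(all_subjects)
    if unknown:
        raise ValueError(f"Unknown subjects: {sorted(unknown)}")
    wanted = set(subjects)
    return [s for s in all_subjects if s in wanted]


# Assumption: subjects are numbered 1..12 (12 subjects in this dataset).
all_subjects = list(range(1, 13))
print(select_subjects(all_subjects, [3, 1]))  # [1, 3]
```

Keeping the full list separate from the filtered one means the original catalogue stays available even after a subset has been selected.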

- convert_to_bids(path=None, subjects=None, overwrite=False, format='EDF', verbose=None, generate_figures=False)

Convert the dataset to BIDS format.

Saves the raw EEG data in a BIDS-compliant directory structure. Unlike the caching mechanism (see CacheConfig), the files produced here do not contain a processing-pipeline hash (desc-<hash>) in their names, making the output a clean, shareable BIDS dataset.

- Parameters:

path (str | Path | None) – Directory under which the BIDS dataset will be written. If None, the default MNE data directory is used (same default as the rest of MOABB).

subjects (list of int | None) – Subject numbers to convert. If None, all subjects in subject_list are converted.

overwrite (bool) – If True, existing BIDS files for a subject are removed before saving. Default is False.

format (str) – The file format for the raw EEG data. Supported values are "EDF" (default), "BrainVision", and "EEGLAB".

verbose (str | None) – Verbosity level forwarded to MNE/MNE-BIDS.

generate_figures (bool) – If True, generate interactive neural signature HTML figures in {bids_root}/derivatives/neural_signatures/. Requires plotly (pip install moabb[interactive]). Default is False.

- Returns:

bids_root – Path to the root of the written BIDS dataset.

- Return type:

Examples

```python
>>> from moabb.datasets import AlexMI
>>> dataset = AlexMI()
>>> bids_root = dataset.convert_to_bids(path='/tmp/bids', subjects=[1])
```

See also

CacheConfig: Cache configuration for get_data().

moabb.datasets.bids_interface.get_bids_root: Return the BIDS root path.

Notes

Added in version 1.5.
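For orientation, the BIDS specification names raw EEG files with key-value entities (sub-, ses-, task-, run-) followed by the eeg suffix, and the extension depends on the format (.edf for EDF, .vhdr for BrainVision, .set for EEGLAB). The sketch below builds such a path. The helper `bids_eeg_path` and the task label "ssvep" are hypothetical, and the exact entities MOABB writes may differ, so inspect the output of convert_to_bids for the real layout.

```python
from pathlib import Path

# Extensions used by BIDS for the formats accepted by `format`.
EXTENSIONS = {"EDF": ".edf", "BrainVision": ".vhdr", "EEGLAB": ".set"}


def bids_eeg_path(root, subject, session, task, run, fmt="EDF"):
    """Build a BIDS-style raw EEG path (illustrative, not MOABB's code).

    Note there is no desc-<hash> entity, unlike cached files.
    """
    entities = f"sub-{subject}_ses-{session}_task-{task}_run-{run}"
    return (
        Path(root)
        / f"sub-{subject}"
        / f"ses-{session}"
        / "eeg"
        / f"{entities}_eeg{EXTENSIONS[fmt]}"
    )


p = bids_eeg_path("/tmp/bids", subject=1, session=0, task="ssvep", run=0)
print(p.as_posix())
# /tmp/bids/sub-1/ses-0/eeg/sub-1_ses-0_task-ssvep_run-0_eeg.edf
```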

- data_path(subject, path=None, force_update=False, update_path=None, verbose=None)

Get the path to the local copy of a subject's data.

- Parameters:

subject (int) – Subject number to use.

path (None | str) – Location in which to look for the data. If None, the environment variable or config parameter MNE_DATASETS_(dataset)_PATH is used. If it doesn’t exist, the “~/mne_data” directory is used. If the dataset is not found under the given path, the data will be automatically downloaded to the specified folder.

force_update (bool) – Force update of the dataset even if a local copy exists.

update_path (bool | None, deprecated) – If True, set the MNE_DATASETS_(dataset)_PATH in mne-python config to the given path. If None, the user is prompted.

verbose (bool, str, int, or None) – If not None, override the default verbosity level (see mne.verbose()).

- Returns:

path – Local path to the given data file. This path is contained inside a list of length one, for compatibility.

- Return type:

- download(subject_list=None, path=None, force_update=False, update_path=None, accept=False, verbose=None)

Download all data from the dataset.

This function is only useful for downloading the whole dataset at once.

- Parameters:

subject_list (list of int | None) – List of subject IDs to download. If None, all subjects are downloaded.

path (None | str) – Location in which to look for the data. If None, the environment variable or config parameter MNE_DATASETS_(dataset)_PATH is used. If it doesn’t exist, the “~/mne_data” directory is used. If the dataset is not found under the given path, the data will be automatically downloaded to the specified folder.

force_update (bool) – Force update of the dataset even if a local copy exists.

update_path (bool | None) – If True, set the MNE_DATASETS_(dataset)_PATH in mne-python config to the given path. If None, the user is prompted.

accept (bool) – Accept the licence terms required to download the data, if any. Default: False.

verbose (bool, str, int, or None) – If not None, override the default verbosity level (see mne.verbose()).
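The lookup order described for the path parameter (explicit argument, then the MNE_DATASETS_(dataset)_PATH setting, then ~/mne_data) can be sketched as below. This is a simplified illustration: it reads only the environment variable, whereas MNE also consults its configuration file, and the dataset key "KALUNGA" is a hypothetical placeholder rather than the key MOABB actually uses.

```python
import os
from pathlib import Path


def resolve_data_root(path=None, dataset_key="KALUNGA"):
    """Simplified sketch of the documented lookup order (not MOABB's code).

    1. An explicit ``path`` argument wins.
    2. Otherwise use the MNE_DATASETS_<KEY>_PATH environment variable.
    3. Otherwise fall back to ~/mne_data.
    """
    if path is not None:
        return Path(path)
    env_value = os.environ.get(f"MNE_DATASETS_{dataset_key}_PATH")
    if env_value:
        return Path(env_value)
    return Path.home() / "mne_data"


print(resolve_data_root("/data/eeg"))  # /data/eeg
```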

- get_additional_metadata(subject: str, session: str, run: str) → None | DataFrame

Load additional metadata for a specific subject, session, and run.

This method is intended to be overridden by subclasses to provide additional metadata specific to the dataset. The metadata is typically loaded from an events.tsv file or similar data source.

- get_block_repetition(paradigm, subjects, block_list, repetition_list)

Select data for all provided subjects, blocks and repetitions.

subject -> session -> run -> block -> repetition

See also

BaseDataset.get_data
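The subject -> session -> run -> block -> repetition hierarchy can be pictured as a nested dictionary. The sketch below selects the requested blocks and repetitions from such a structure; the dictionary layout, the integer keys and the helper `select_blocks` are hypothetical and do not describe how MOABB stores or names these levels internally.

```python
def select_blocks(data, subjects, block_list, repetition_list):
    """Keep only the requested blocks and repetitions (hypothetical layout).

    ``data`` is nested as subject -> session -> run -> block -> repetition.
    """
    blocks, reps = set(block_list), set(repetition_list)
    selected = []
    for subject in subjects:
        for session, runs in data[subject].items():
            for run, run_blocks in runs.items():
                for block, repetitions in run_blocks.items():
                    if block not in blocks:
                        continue
                    for repetition, trial in repetitions.items():
                        if repetition in reps:
                            selected.append(
                                (subject, session, run, block, repetition, trial)
                            )
    return selected


# Toy data: one subject, one session, one run, 2 blocks x 3 repetitions.
toy = {1: {"0": {"0": {b: {r: f"b{b}r{r}" for r in range(3)} for b in range(2)}}}}
print(len(select_blocks(toy, [1], block_list=[0], repetition_list=[0, 2])))  # 2
```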

- get_data(subjects=None, cache_config=None, process_pipeline=None)

Return the data corresponding to a list of subjects.

The returned data is a dictionary with the following structure:

data = {'subject_id' : {'session_id': {'run_id': run} } }

Subjects are at the top level, followed by sessions and then runs. A session is a recording done in a single day, without removing the EEG cap. A session consists of at least one run. A run is a single contiguous recording. Some datasets break sessions into multiple runs.
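Because the returned object is a plain nested dictionary, walking every run takes three nested loops. The sketch below uses placeholder strings instead of mne.io.Raw objects so that it runs without downloading anything; the session and run keys ("0", "run_0", ...) and the helper `iter_runs` are illustrative assumptions, not the exact keys returned for Kalunga2016.

```python
# Placeholder structure mimicking {'subject_id': {'session_id': {'run_id': run}}}.
data = {
    1: {"0": {"run_0": "raw-1-0-0", "run_1": "raw-1-0-1"}},
    2: {"0": {"run_0": "raw-2-0-0"}},
}


def iter_runs(data):
    """Yield (subject, session, run_id, run) for every run in the dict."""
    for subject, sessions in data.items():
        for session, runs in sessions.items():
            for run_id, run in runs.items():
                yield subject, session, run_id, run


for subject, session, run_id, run in iter_runs(data):
    print(subject, session, run_id, run)
```

With real data, each `run` would be a raw recording object rather than a string.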

Processing steps can optionally be applied to the data using the *_pipeline arguments. These pipelines are applied in the following order: raw_pipeline -> epochs_pipeline -> array_pipeline. If a *_pipeline argument is None, the step will be skipped. Therefore, the array_pipeline may receive either an mne.io.Raw or an mne.Epochs object as input, depending on whether epochs_pipeline is None or not.

- Parameters:

subjects (List of int) – List of subject numbers.

cache_config (dict | CacheConfig) – Configuration for caching of datasets. See CacheConfig for details.

process_pipeline (Pipeline | None) – Optional processing pipeline to apply to the data. To generate an adequate pipeline, we recommend using moabb.utils.make_process_pipelines(). This pipeline will receive mne.io.BaseRaw objects. The step names of this pipeline should be elements of StepType. Depending on its name, each step should return an mne.io.BaseRaw, an mne.Epochs, or a numpy.ndarray. This pipeline must be “fixed” because it will not be trained, i.e. no call to fit will be made.

- Returns:

data – dict containing the raw data

- Return type:

Dict
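The order in which the optional stages are applied (raw_pipeline -> epochs_pipeline -> array_pipeline, skipping any stage that is None) can be sketched with plain callables. The function `apply_stages` and the toy stages below are hypothetical stand-ins for real MNE objects and scikit-learn pipelines; the point is only to show why the array stage sees the raw object when the epochs stage is skipped.

```python
def apply_stages(raw, raw_pipeline=None, epochs_pipeline=None, array_pipeline=None):
    """Apply the optional stages in order, skipping any that are None."""
    data = raw
    for stage in (raw_pipeline, epochs_pipeline, array_pipeline):
        if stage is not None:
            data = stage(data)
    return data


# Toy stages that record what they received (strings stand in for MNE objects).
def to_epochs(x):
    return f"epochs({x})"


def to_array(x):
    return f"array({x})"


print(apply_stages("raw", epochs_pipeline=to_epochs, array_pipeline=to_array))
# array(epochs(raw))
print(apply_stages("raw", array_pipeline=to_array))
# array(raw)  <- the array stage received the raw object directly
```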

- property metadata: DatasetMetadata | None

Return structured metadata for this dataset.

Returns the DatasetMetadata object from the centralized catalog, or None if metadata is not available for this dataset.

- Returns:

The metadata object containing acquisition parameters, participant demographics, experiment details, and documentation. Returns None if no metadata is registered for this dataset.

- Return type:

DatasetMetadata | None

Examples

```python
>>> from moabb.datasets import BNCI2014_001
>>> dataset = BNCI2014_001()
>>> dataset.metadata.participants.n_subjects
9
>>> dataset.metadata.acquisition.sampling_rate
250.0
```