moabb.evaluations.CrossSessionEvaluation

- class moabb.evaluations.CrossSessionEvaluation(paradigm: BaseParadigm, datasets: list[BaseDataset] | None = None, random_state: int | None = None, n_jobs: int = 1, overwrite: bool = False, error_score: str | float = 'raise', suffix: str = '', hdf5_path: str | None = None, additional_columns: list[str] | None = None, return_epochs: bool = False, return_raws: bool = False, mne_labels: bool = False, n_splits: int | None = None, cv_class: type | None = None, cv_kwargs: dict | None = None, save_model: bool = False, cache_config: CacheConfig | None = None, optuna: bool = False, time_out: int = 900, verbose: bool | str | int | None = None, codecarbon_config: dict | None = None)

Cross-session performance evaluation.

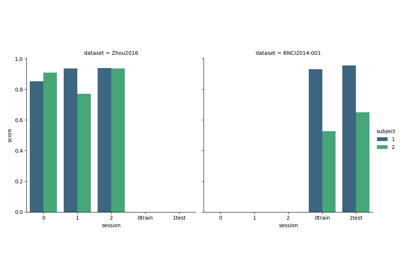

Evaluates the performance of a pipeline across sessions, within a single subject. Verifies that there are at least two sessions before starting the evaluation.
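To make the idea concrete, the sketch below shows one common way to split a single subject's trials across sessions: hold out each session in turn and train on the others. This is an illustration only, assuming a leave-one-session-out scheme; it is not MOABB's implementation, and the function name `leave_one_session_out` and the session labels are hypothetical.

```python
from collections import defaultdict


def leave_one_session_out(session_labels):
    """Yield (held_out_session, train_idx, test_idx) for one subject's trials.

    Illustrative helper, not part of MOABB.
    """
    # Group trial indices by the session they were recorded in.
    by_session = defaultdict(list)
    for idx, session in enumerate(session_labels):
        by_session[session].append(idx)

    # Mirror the documented precondition: at least two sessions are required.
    if len(by_session) < 2:
        raise ValueError("cross-session evaluation needs at least two sessions")

    ordered = sorted(by_session)
    for held_out in ordered:
        test_idx = by_session[held_out]
        train_idx = [i for s in ordered if s != held_out for i in by_session[s]]
        yield held_out, train_idx, test_idx


# Hypothetical session label for each of one subject's six trials.
sessions = ["0train", "0train", "0train", "1test", "1test", "1test"]
splits = list(leave_one_session_out(sessions))
for held_out, train_idx, test_idx in splits:
    print(held_out, train_idx, test_idx)
```

Each yielded split tests on trials from a session that contributed nothing to training, which is what separates a cross-session score from a within-session one.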

- Parameters:

paradigm (BaseParadigm) – The paradigm to use.

datasets (list of BaseDataset) – The list of datasets on which to run the evaluation. If None, the list of compatible datasets is retrieved from the paradigm instance.

random_state (int or None) – If not None, guarantees the same seed for shuffling examples. Defaults to None.

n_jobs (int) – Number of jobs used for fitting the pipeline. Defaults to 1.

overwrite (bool) – If True, overwrite the results. Defaults to False.

error_score (str or float) – Value to assign to the score if an error occurs in estimator fitting. If set to 'raise', the error is raised. Defaults to 'raise'.

suffix (str) – Suffix for the results file.

hdf5_path (str) – Specific path for storing the results and models.

additional_columns (list of str or None) – Additional columns to add to the results.

return_epochs (bool) – Use MNE epochs to train pipelines. Defaults to False.

return_raws (bool) – Use MNE raw data to train pipelines. Defaults to False.

mne_labels (bool) – If returning MNE epochs, use the original dataset labels if True. Defaults to False.

save_model (bool) – Save the model after training, for each fold of cross-validation if needed. Defaults to False.

cache_config (CacheConfig or None) – Configuration for caching of datasets. See moabb.datasets.base.CacheConfig for details. Defaults to None.
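The behaviour described for error_score can be sketched in plain Python: with 'raise' the fitting error propagates, otherwise the given value is recorded as the score. The helper `fit_and_score` and the two toy fit functions are hypothetical stand-ins, not MOABB functions, and the sketch only illustrates the documented semantics.

```python
import math


def fit_and_score(fit_fn, error_score="raise"):
    """Run fit_fn and return its score, handling failures per error_score.

    Illustrative helper, not part of MOABB.
    """
    try:
        return fit_fn()
    except Exception:
        if error_score == "raise":
            # Propagate the original fitting error to the caller.
            raise
        # Otherwise record the fallback value as the score.
        return float(error_score)


def working_fit():
    return 0.9


def failing_fit():
    raise RuntimeError("estimator failed to fit")


ok = fit_and_score(working_fit)
fallback = fit_and_score(failing_fit, error_score=float("nan"))
print(ok, math.isnan(fallback))
```

A numeric fallback lets a long run over many datasets continue after one pipeline fails, at the cost of having to filter those placeholder scores out afterwards.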

Notes

Added in version 1.1.0: Add save_model and cache_config parameters.

- evaluate(dataset: BaseDataset, pipelines: dict, param_grid: dict | None, process_pipeline, postprocess_pipeline=None)

Evaluate results on a single dataset.

This method returns a generator. Each result item is a dict with the following convention:

res = {'time': duration of the training, 'dataset': dataset id, 'subject': subject id, 'session': session id, 'score': score, 'n_samples': number of training examples, 'n_channels': number of channels, 'pipeline': pipeline name}
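Because the results arrive one dict at a time, they can be aggregated lazily as the generator is consumed. The sketch below uses a hypothetical stand-in generator, `fake_evaluate`, that yields dicts with the keys listed above; the pipeline names and scores are made up for illustration and do not come from any real run.

```python
from collections import defaultdict
from statistics import mean


def fake_evaluate():
    """Hypothetical stand-in for the generator; yields dicts with the listed keys."""
    rows = [
        ("0", "csp_lda", 0.70),
        ("1", "csp_lda", 0.80),
        ("0", "ts_lr", 0.75),
        ("1", "ts_lr", 0.85),
    ]
    for session, pipeline, score in rows:
        yield {
            "time": 0.1,
            "dataset": "demo",
            "subject": 1,
            "session": session,
            "score": score,
            "n_samples": 100,
            "n_channels": 22,
            "pipeline": pipeline,
        }


def mean_score_per_pipeline(results):
    """Average the 'score' field over all result dicts, grouped by pipeline name."""
    scores = defaultdict(list)
    for res in results:
        scores[res["pipeline"]].append(res["score"])
    return {name: mean(values) for name, values in scores.items()}


summary = mean_score_per_pipeline(fake_evaluate())
print(summary)
```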

- is_valid(dataset: BaseDataset) → bool

Verify the dataset is compatible with evaluation.

This method is called to verify that the datasets given to the constructor are compatible with the evaluation context.

This method should return False if the dataset does not match the evaluation. This is the case, for example, if the dataset does not contain enough sessions for a cross-session evaluation.

- Parameters:

dataset (BaseDataset) – The dataset to verify.
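A compatibility check of this kind can be sketched as a simple predicate on the number of sessions. The example assumes the dataset exposes its session count as an attribute named `n_sessions`; the `DemoDataset` class and the function `is_valid_for_cross_session` are hypothetical stand-ins for illustration, not MOABB classes.

```python
from dataclasses import dataclass


@dataclass
class DemoDataset:
    """Hypothetical stand-in for a dataset; only the session count matters here."""

    code: str
    n_sessions: int


def is_valid_for_cross_session(dataset):
    """Return True only if the dataset has more than one session."""
    return dataset.n_sessions > 1


candidates = [DemoDataset("two-sessions", 2), DemoDataset("single-session", 1)]
compatible = [d.code for d in candidates if is_valid_for_cross_session(d)]
print(compatible)
```

Filtering up front keeps single-session datasets from failing later, once the evaluation tries to hold out a session that does not exist.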